valstechblog/molloy-detector on GitHub

The motivating user story

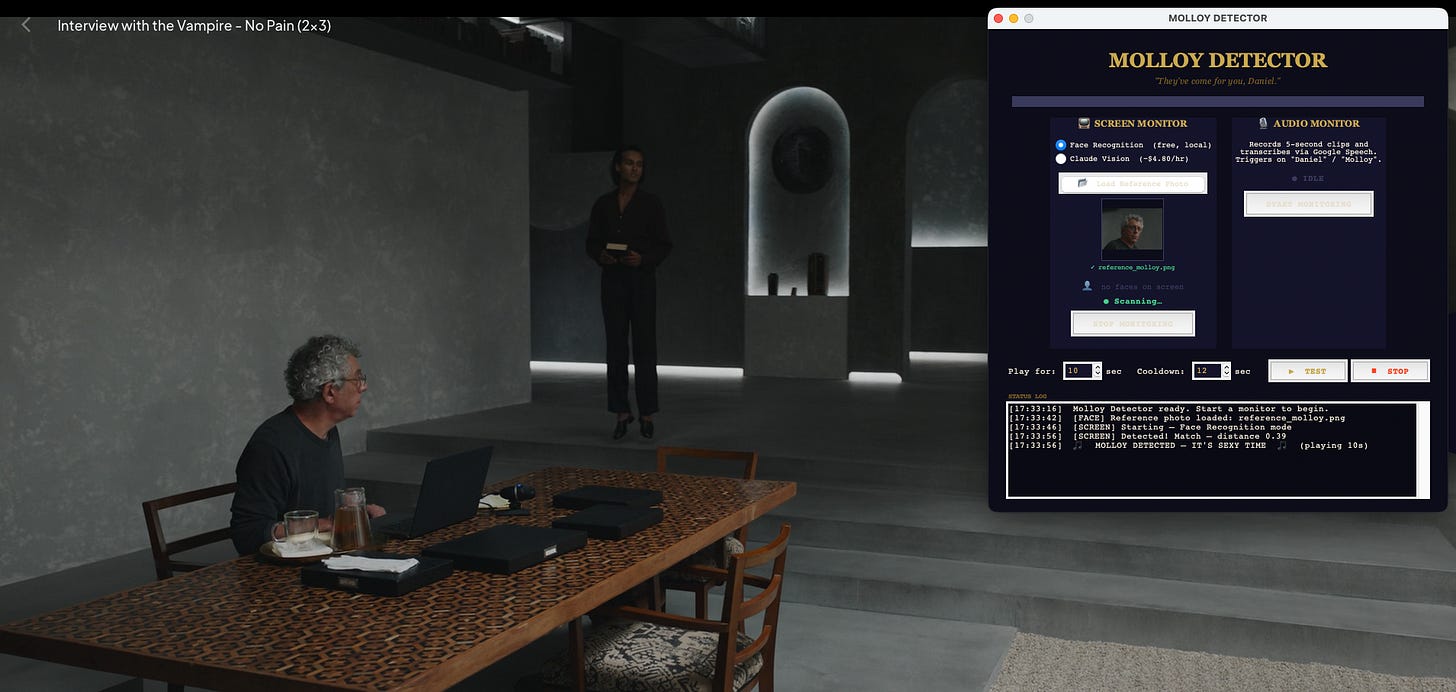

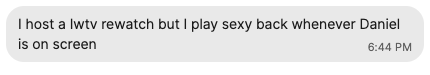

On Monday, May 4, 2026, Jenny sent me this use case (referring to Daniel Molloy, played by Eric Bogosian in the AMC TV series Interview with the Vampire):

That same night I watched Jenny go into an episode with SexyBack queued up on her phone ready to play, but she quickly became so absorbed in the dialogue and action that she forgot to press play at least 50% of the time Daniel came on screen. Even more concerning from an end user experience perspective: she had to manually stop and rewind the track each time to prepare for the next instance, losing precious time and attention she could have used to continue monitoring on-screen appearances.

I set out to solve this problem once and for all.

20 minutes with Claude Code

On Tuesday, May 5, 2026, ~5:00-5:20pm PT:

- Seed prompt. I prompted Claude Code: “build a functioning UI that you can set to listen to audio or monitor a screen. when an actor (in this case, Daniel Molloy from Interview with a Vampire) comes on screen or starts speaking in detected audio, play a certain sound bite (in this case, sexyback by justin timberlake)”

- Claude is a better UI designer than I am. Claude generated a prettier UI than anything I compiled and ran locally in at least two years of full-time computer science coursework and at least five years of full-time professional software engineering. Stylistically, I did not modify this UI at all and pushed directly to production.

- Like many humans, Claude can struggle to parse an unstructured instruction stream. I asked Claude to “listen to audio… when… in this case, Daniel Molloy… starts speaking in detected audio”, which Claude interpreted as listening to literal audio triggers “daniel”, “molloy”, “mr. molloy”, “daniel molloy” and also added “the journalist” for good measure (evidence of Claude’s wider training, since I did not mention Molloy’s role in the show). Since Molloy is neither Siri nor Alexa and has no default wake word in the show, this was as good a detection as any. Reasoning that audio detection is lighter weight than facial recognition and that power users could go into the source code to modify these triggers themselves, I did not further refine the audio detection module and pushed it to production.

- Claude is, by default, not cost-conscious. Functionally, Claude needed some direction. Its first solution for screen monitoring facial recognition was to periodically take a screenshot (fine) and send screenshots to Claude Vision for face matching with the request “Is Daniel Molloy (Eric Bogosian) visible?”. With a default 3-second screenshot interval over an average of 60 minutes per episode, this meant about 1,200 screenshots per episode. Even before estimating any additional token spend beyond a baseline of one image per request sent to Claude Vision, this was already approaching $5 per episode (Sonnet 4.6 rates). That simply would not stand, as known potential users of this software complained about recent Netflix price increases from $7.99 to $8.99 per month. I prompted Claude to add a cost estimate to the UI for Claude Vision and to find cheaper methods.

- Claude makes a reasonable cost-functionality tradeoff. Claude proposed less frequent API calls to reduce cost, which was sensible but would sacrifice a quality standard (low-latency facial detection) I wanted to uphold for this product. Claude also proposed a local solution to completely eliminate the need to make API calls, but this required adding a UI component to have the user upload a reference photo that could then be used as input to the `face_recognition` Python library.

- v0.0: User testing and refinement necessary. Now I had a working Python program to open a UI, requiring an exactly named `assets/sexyback.mp3` in the source code folder and a reference photo to be uploaded in the UI if the user wanted to use facial recognition. I ran the Python program locally from the command line to open MOLLOY DETECTOR (impressively, Claude was able to one-shot match the ethos I had hoped to convey in UI design), provided necessary local screen monitoring permissions, uploaded a reference photo (a screenshot from the show), and started an episode of Interview with the Vampire. MOLLOY DETECTOR was unable to identify the back of Daniel Molloy’s head, which was reasonable. When his face came on screen, MOLLOY DETECTOR printed a delightful log message and started playing SexyBack. I was elated. However, after a few seconds, SexyBack started to drown out on-screen dialogue. After 10 seconds, I realized there was no functionality to stop or fade out the track. Since I had uploaded the entire 3+ minutes of SexyBack to `assets/sexyback.mp3`, MOLLOY DETECTOR was dutifully playing the entire 3+ minutes of SexyBack over critical, dramatic dialogue.

- v0.1: Add audio auto-stop and UI options. I gave Claude the precise, technical feedback “is sound cooldown working”, to which Claude responded “Yes, it’s working correctly.” However, the rationale provided referenced code for detection cooldown, so I added additional context “the sound isn’t stopping”, which Claude understood as instruction to add configurable auto-stop functionality (defaulting to 10 seconds) and a STOP button to the UI for the user to hard override. This was enough for me. I re-ran MOLLOY DETECTOR, SexyBack started playing when Molloy’s full face came on screen, and stopped after a reasonable time.

- v0.2: Addressing lags in on-screen face recognition. Not being able to detect backs of heads was reasonable, especially since I only provided one reference photo of Daniel Molloy mid-speech. Given the 3-second detection interval I expected a small amount of lag. But there were instances where Molloy was on screen for more than a few seconds and I did not hear SexyBack. I also noticed that the program did not detect any faces on screen when there were multiple faces on screen. I told Claude “can’t tell when any faces are on screen” and Claude added a color-coded real-time UI diagnostic “no faces on screen” / “faces found / no match” / “match - molloy detected” in addition to existing logs. Claude also suggested experimenting with the tolerance used with `face_distance`, which had defaulted to 0.55. Per Claude I set this to 0.60, which theoretically would not actually solve any of the detection issues I raised but upon next run worked reasonably well. I tabled this to re-visit later.
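For anyone who wants to sanity-check the Claude Vision cost estimate above, here is a rough back-of-the-envelope sketch. The 3-second interval and 60-minute episode come from the numbers above; the tokens-per-image and price-per-million-tokens values are placeholder assumptions I picked for illustration, not quoted rates, and the function names are hypothetical rather than anything in the MOLLOY DETECTOR source.

```python
def screenshots_per_episode(episode_minutes: float, interval_seconds: float) -> int:
    """Number of screenshots taken (one Vision request each) during an episode."""
    return int(episode_minutes * 60 // interval_seconds)


def vision_cost_usd(n_images: int, tokens_per_image: float,
                    usd_per_million_input_tokens: float) -> float:
    """Baseline image-only input cost; ignores prompt text and output tokens."""
    return n_images * tokens_per_image * usd_per_million_input_tokens / 1_000_000


# Figures from the post: 3-second interval, 60-minute episode.
n_images = screenshots_per_episode(episode_minutes=60, interval_seconds=3)

# ASSUMPTIONS for illustration only: substitute the real image size and rate.
ASSUMED_TOKENS_PER_IMAGE = 1_300
ASSUMED_USD_PER_MILLION_INPUT_TOKENS = 3.00

estimate = vision_cost_usd(n_images, ASSUMED_TOKENS_PER_IMAGE,
                           ASSUMED_USD_PER_MILLION_INPUT_TOKENS)
print(n_images)            # 1200
print(round(estimate, 2))  # 4.68 under the assumed values above
```

The cost scales linearly with the number of screenshots, which is why a longer interval is the obvious lever: it cuts the bill and the detection latency goes up by the same factor.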
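To make the v0.2 tolerance change concrete, here is a minimal sketch of how a distance threshold could map onto the three UI diagnostics. It assumes the app compares each detected face's distance to the reference photo against the tolerance (a face counts as a match when its distance is at or below the tolerance, which is how `face_recognition` treats tolerance as far as I know). The function name and the sample distances are hypothetical, and plain floats stand in for the library's output so the snippet runs without it.

```python
from typing import Sequence


def diagnose_frame(distances: Sequence[float], tolerance: float = 0.60) -> str:
    """Map per-face distances for one screenshot to a UI diagnostic string.

    `distances` holds one value per face detected in the frame: the distance
    between that face and the reference photo (smaller means more similar).
    """
    if len(distances) == 0:
        return "no faces on screen"
    if min(distances) <= tolerance:
        return "match - molloy detected"
    return "faces found / no match"


# Hypothetical frame with two faces; the closer one is at distance 0.58.
frame = [0.58, 0.91]
print(diagnose_frame(frame, tolerance=0.55))  # faces found / no match
print(diagnose_frame(frame, tolerance=0.60))  # match - molloy detected
print(diagnose_frame([], tolerance=0.60))     # no faces on screen
```

Under this reading, raising the tolerance only turns borderline "no match" frames into matches. It does nothing for frames where no face is detected at all, which fits my sense that the change should not have fixed the multi-face problem.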

40 minutes with myself and my text editor

At the 20-minute development mark, I pushed the source code to GitHub and started to write documentation.

I do not use AI tools to write since I prefer to write from the heart (even, and usually, at my own expense), so it took me the rest of the hour to write the README. Notably, writing a quickstart user guide reinforced for me that MOLLOY DETECTOR is unusable for a non-technical user and is far from ready to be packaged into a consumer product as-is. It requires command line proficiency, uploading likely copyrighted material to specific filepaths, and granting screen recording permissions that would and should face hurdles in any app store worth its salt in security practices. I pushed copyrighted audio (SexyBack) to the GitHub repository because I put my customers first and I am not trying to profit from this, but that is a huge no-go in the rest of the industry.

I filed the most pressing functionality improvements as issues (UI accessibility, a runnable executable, letting users upload audio for playback instead of requiring an exact `assets/sexyback.mp3` file path, and testing Claude Vision and audio detection).

What this means for all of us

MOLLOY DETECTOR is not ready to be a Chrome extension. It is not ready to go on any app store. And while Claude Code, even out of the box without significant MCP configuration, gets something reasonable up and running in a short amount of time, it can also lead an inexperienced developer astray.

For most software engineers, product managers, and industry practitioners in San Francisco and New York reading this blog, this isn’t news. You have little trouble guiding Claude, Codex, or Cursor to accelerate your development; you naturally integrate them into your daily work in an informed, controlled way. You understood MCPs immediately as a conceptual API. That’s because you’re the experts. You’ve built software from scratch, and you’ve made cost and design trade-offs with definitionally irrational human beings before there were standardized recommendations. You are able to appreciate the situations where Claude can one-shot an implementation and you recognize the limitations it has in building extensible, maintainable software without your explicit enforcement.

You are also the exception in the massive population starting to rely on tools like Claude Code to build products that humans use.

One reason why I continue to use Uber instead of Waymo is that I learn a lot from drivers. My driver on Monday gave me a fairly well-informed take on OpenAI based on his conversations with riders in San Francisco and the tech podcasts he listens to while driving. He was also the first adopter of AI tools in his day job for a catering company and taught his coworkers how to use Copilot. His biggest criticism of Copilot? “You can still tell when writing is AI-generated.”

Personally, I don’t plan to take MOLLOY DETECTOR too much further. As with many things, I feel I’ve gotten at least 80% of the learning from the first 20% of my time with this problem. We’ll stress test this software at an upcoming watch party, which may reveal additional urgent issues.

The larger unknowns and opportunities for longer-term economic and social progress are in studying the ways coding and AI assistants influence the ways we learn and work. To me, this is a permanent work in progress. We already know the risks of the data and methods we use to train our machine counterparts. We know how badly it can go wrong. We have a sense that we need to establish and enforce safety guardrails and checks. The balance I am constantly seeking and revising my own calculus around is the trade-off between what we learn from these tools we’ve trained to be more competent than any single human in many regards, and how much we’re willing to give up in developing our knowledge and reasoning from first principles in the name of productivity.